Optispan-Apollo

HIPAA-compliant clinical collaboration platform reducing documentation and coordination friction.

Overview

Optispan is a HIPAA-compliant clinical collaboration platform that helps physicians and clinic staff coordinate care while dramatically reducing documentation burden.

I led 0–1 product design across provider and patient experiences, defining AI-assisted documentation, file ingestion, task and notification systems, and patient profiles. I collaborated closely with clinicians and the engineering team to establish human-in-the-loop AI workflows and scalable interaction foundations.

Responsibility

Solo Product Designer

Provider and Patient experiences, Desktop + Mobile

Focus

Human-in-the-loop AI Workflows, File Ingestion, Tasks/Notifications System

Timeline

2025.6 - 2026.1, 7 months

Collaboration

Project Manager, Product Manager, 5 Engineers, 3 Internal Clinic Users, 3 Customer Users

Impact

90 → 30 min

Note time reduced

65% ↓

Manual entry reduced

92%

Customer user satisfaction

Problem - Pre-design Situation

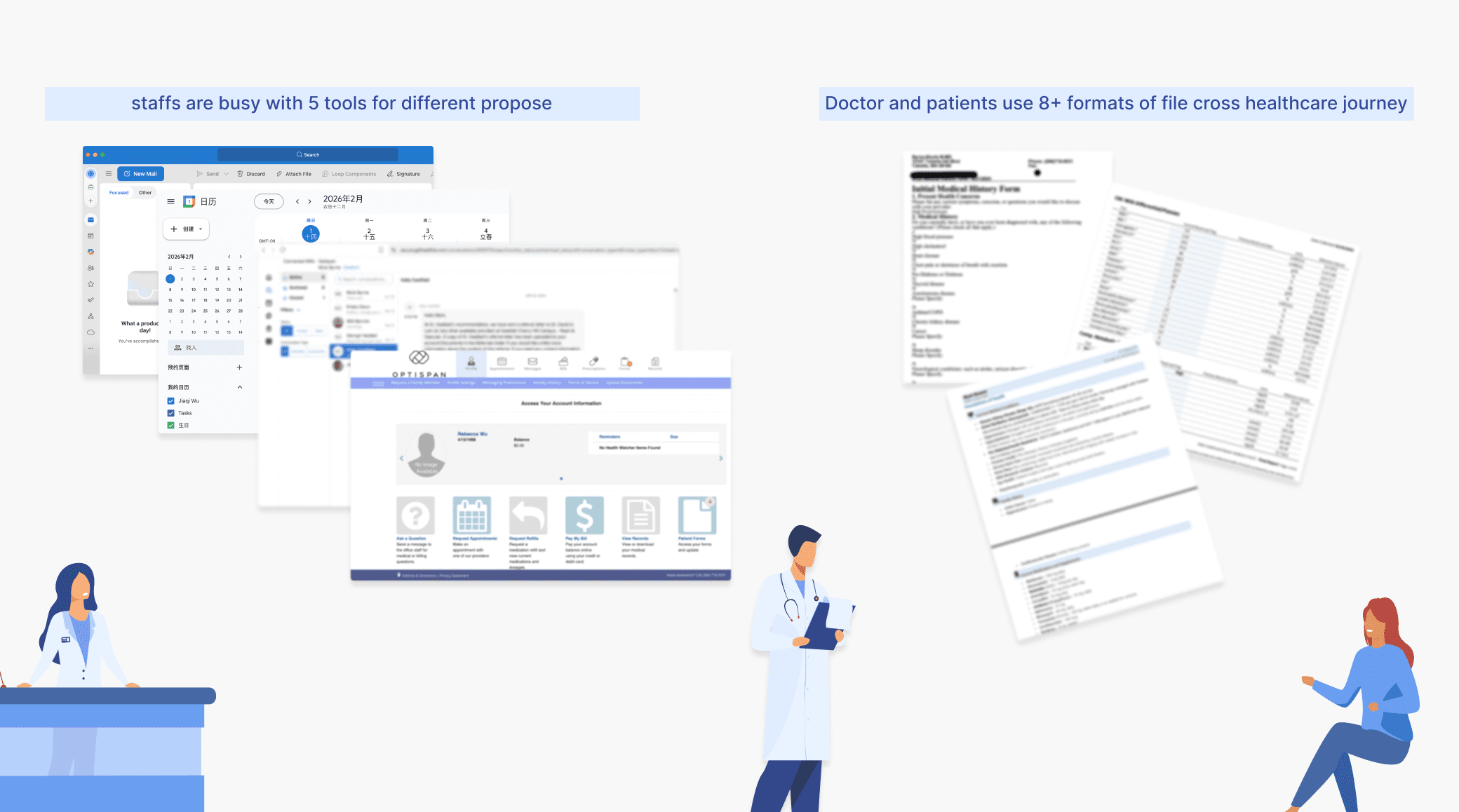

Fragmented data and tool sprawl break clinical workflows

Current System Problem

Image

Fragmented data increases clinical risk

Patient information was scattered across PDFs, lab reports, IoT devices, and manual entry.

Tool sprawl breaks care coordination

Clinical workflows spanned 5+ tools (EHR, Zoom, phone calls, email, Healthie).

Solution Overview

Designed an end-to-end AI-assisted workflow that integrates documentation, data ingestion, and task coordination while preserving human control and auditability.

SOLUTION highlight/1

A user-owned data foundation from files to clinical context

I identified friction in the backend-supported file handling workflow and proposed a user-facing file system that treats documents as first-class objects with visible AI-generated tags and review states.
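To make "documents as first-class objects" concrete, here is a minimal Python sketch of what such a file record might hold. The class, field, and state names (`ClinicalFile`, `ReviewState`, `confirm_tags`, `flag`) are hypothetical illustrations, not the platform's actual schema. The idea is that AI-generated tags stay visible and editable, and a file only counts as reviewed once a person confirms them.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class ReviewState(Enum):
    # Hypothetical review states for an uploaded document.
    AI_TAGGED = "ai_tagged"              # AI proposed tags; no one has reviewed them yet
    CONFIRMED = "confirmed"              # a user accepted or edited the tags
    NEEDS_ATTENTION = "needs_attention"  # a user flagged the file for follow-up


@dataclass
class ClinicalFile:
    file_id: str
    name: str
    ai_tags: list[str] = field(default_factory=list)  # shown to users, not hidden in the backend
    review_state: ReviewState = ReviewState.AI_TAGGED

    def confirm_tags(self, tags: list[str] | None = None) -> None:
        """Accept the AI tags as-is, or replace them with user-edited tags."""
        if tags is not None:
            self.ai_tags = list(tags)
        self.review_state = ReviewState.CONFIRMED

    def flag(self) -> None:
        """Mark the file for follow-up instead of silently trusting its tags."""
        self.review_state = ReviewState.NEEDS_ATTENTION
```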

Feature: File Upload

Image

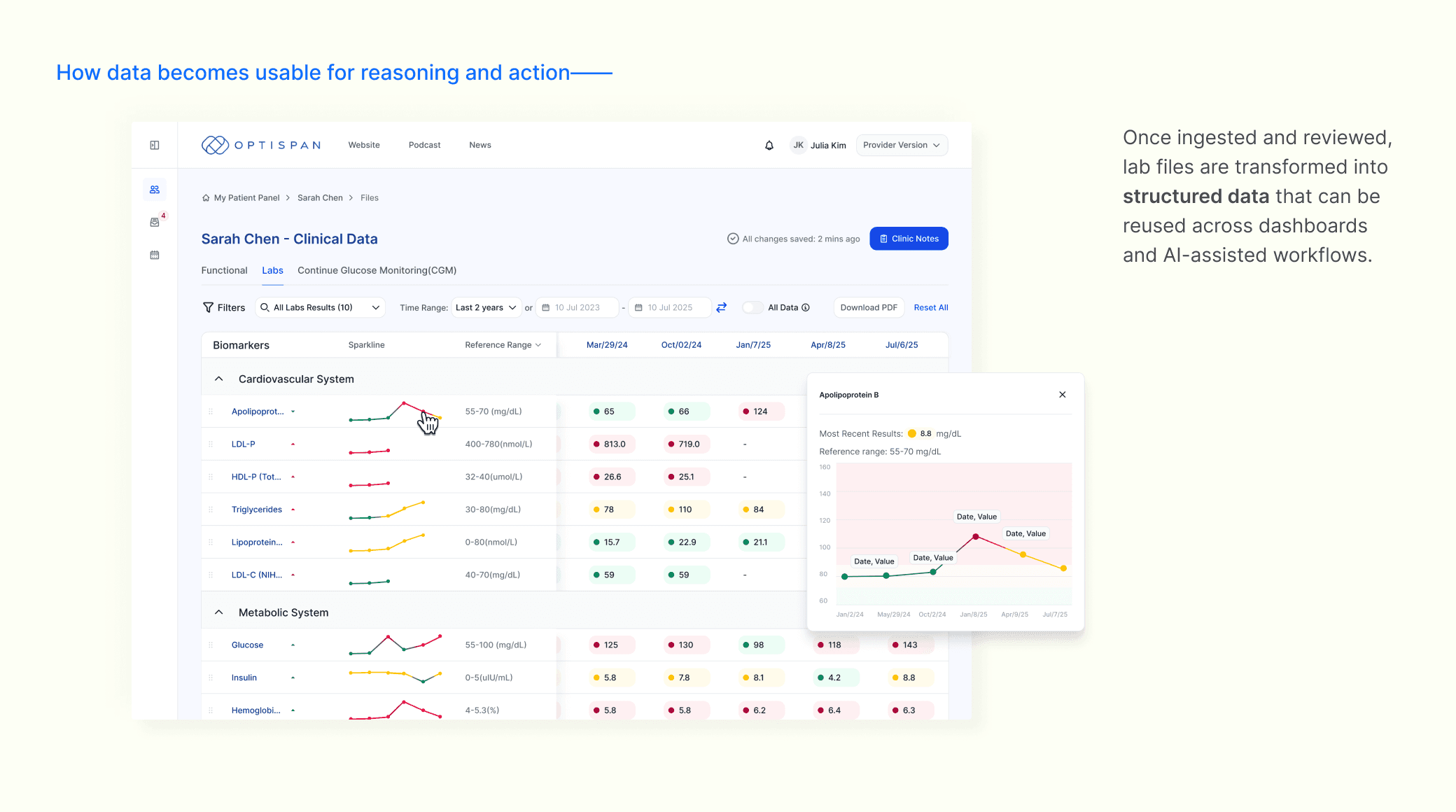

Structured data extracted from uploaded files powered an 80+ biomarker dashboard and served as a shared foundation for both clinical decision-making and AI-assisted documentation.

Feature: Clinic Data-Labs

Image

SOLUTION highlight/2

Explicit human-in-the-loop boundaries for clinical AI

With structured data as the foundation, I positioned a Clinic AI copilot as a collaborative layer in documentation rather than a decision-maker. By defining explicit human-in-the-loop boundaries and review states, clinicians retained control and accountability while benefiting from meaningful efficiency gains.
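One way to encode such a boundary is to make sign-off an explicit, required clinician action in the data model. The sketch below is a hypothetical Python illustration (`NoteDraft`, `NoteStatus`, and `sign_off` are assumed names, not the product's code): the AI can only produce or revise a draft, only a named clinician can sign it after review, and once signed the AI can no longer overwrite it.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class NoteStatus(Enum):
    AI_DRAFT = "ai_draft"    # generated by the copilot, not yet reviewed
    IN_REVIEW = "in_review"  # a clinician is reviewing or editing the draft
    SIGNED = "signed"        # a clinician accepted accountability for the content


@dataclass
class NoteDraft:
    text: str
    status: NoteStatus = NoteStatus.AI_DRAFT
    signed_by: str | None = None

    def apply_ai_revision(self, new_text: str) -> None:
        # The copilot may revise unsigned drafts; a revision sends the note
        # back to AI_DRAFT so the clinician must review it again.
        if self.status is NoteStatus.SIGNED:
            raise PermissionError("AI cannot modify a signed note")
        self.text = new_text
        self.status = NoteStatus.AI_DRAFT

    def start_review(self) -> None:
        if self.status is NoteStatus.SIGNED:
            raise PermissionError("note is already signed")
        self.status = NoteStatus.IN_REVIEW

    def sign_off(self, clinician_id: str) -> None:
        # Signing is only possible after an explicit review step.
        if self.status is not NoteStatus.IN_REVIEW:
            raise ValueError("note must be reviewed before it can be signed")
        self.status = NoteStatus.SIGNED
        self.signed_by = clinician_id
```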

AI-generated Clinic Note flow with human-in-the-loop review

Demo

SOLUTION highlight/3

State-driven task governance across Clinic and Patient Portals

As the platform scaled in real clinic workflows, coordination between clinic staff and patients became a reliability risk.

I mapped cross-portal transition points and established a shared lifecycle model to clarify ownership, timing, and escalation.

Working with clinicians and engineering, we centralized task governance across portals, increasing task completion rates to 80% and reducing care-stage friction.

End-to-End State Synchronization Across Portals

When a clinic assigns a task, the state change propagates instantly to the patient portal, and the clinic dashboard updates as soon as the patient completes it. Both portals operate on a single source of truth.
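The single-source-of-truth idea can be sketched as one shared task store that both portals subscribe to, so neither portal keeps its own copy of task state. This is a minimal in-memory Python illustration under that assumption; `TaskStore`, `subscribe`, and the portal callbacks are hypothetical, and a production system would persist state and push updates over the network rather than call functions in-process.

```python
from __future__ import annotations

from typing import Callable

Listener = Callable[[str, str], None]  # called with (task_id, new_state)


class TaskStore:
    """One authoritative record of task state; portals read from it and subscribe to it."""

    def __init__(self) -> None:
        self._states: dict[str, str] = {}
        self._listeners: list[Listener] = []

    def subscribe(self, listener: Listener) -> None:
        self._listeners.append(listener)

    def set_state(self, task_id: str, new_state: str) -> None:
        self._states[task_id] = new_state
        # Every subscriber (clinic dashboard, patient portal) receives the same change.
        for listener in self._listeners:
            listener(task_id, new_state)

    def get_state(self, task_id: str) -> str | None:
        return self._states.get(task_id)
```

A clinic assigning a task and a patient later completing it would both go through `set_state`, so the two portals cannot drift apart.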

End-to-end task synchronization across portals

Demo

From Isolated Feature to Lifecycle Governance

Rather than designing tasks and reminders as isolated features, I defined a task lifecycle model shared across portals. All state mutations flow through structured governance rules, ensuring synchronization, visibility, and accountability.
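A shared lifecycle with governed state mutations is essentially a finite state machine with an explicit transition table. The Python sketch below assumes a hypothetical set of states and actors (`TaskState`, `ALLOWED`, and the "patient"/"clinic"/"system" roles are illustrative; the real lifecycle may differ) and rejects any mutation the rules do not allow.

```python
from __future__ import annotations

from enum import Enum


class TaskState(Enum):
    ASSIGNED = "assigned"
    IN_PROGRESS = "in_progress"
    COMPLETED = "completed"
    OVERDUE = "overdue"
    ESCALATED = "escalated"


# Governance rules: (current state, actor) -> states that actor may move the task to.
# These states, actors, and transitions are illustrative assumptions.
ALLOWED: dict[tuple[TaskState, str], set[TaskState]] = {
    (TaskState.ASSIGNED, "patient"): {TaskState.IN_PROGRESS, TaskState.COMPLETED},
    (TaskState.IN_PROGRESS, "patient"): {TaskState.COMPLETED},
    (TaskState.ASSIGNED, "system"): {TaskState.OVERDUE},     # timing rule
    (TaskState.IN_PROGRESS, "system"): {TaskState.OVERDUE},
    (TaskState.OVERDUE, "patient"): {TaskState.COMPLETED},
    (TaskState.OVERDUE, "clinic"): {TaskState.ESCALATED},    # escalation rule
    (TaskState.ESCALATED, "clinic"): {TaskState.ASSIGNED, TaskState.COMPLETED},
}


def transition(current: TaskState, target: TaskState, actor: str) -> TaskState:
    """Return the new state, or raise if the governance rules forbid the change."""
    if target not in ALLOWED.get((current, actor), set()):
        raise ValueError(f"{actor} cannot move task from {current.value} to {target.value}")
    return target
```

Because every mutation passes through `transition`, changing a rule means editing one table rather than separate logic in each portal.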

Task lifecycle model shared across portals

Diagram Motion

Key iterations

Iteration 1: Human-Initiated AI Refresh

The initial system automatically regenerated clinical notes as data arrived, creating false confidence and eroding clinician trust. Trust broke because the system acted without clinician intent.

Design Reframe

By mapping human and data touchpoints across the clinical workflow, I reframed the problem from “How can we use AI?” to “How should AI act under human intent?”

Diagram: Data Arrival Impact Map

Image

Key Design Shift

Through iterative mapping, I aligned design, data, and engineering around a shared principle: AI should act only under human intent.

The system supports this through readiness signals, such as data completeness and the availability of key data, that help clinicians judge when AI assistance is appropriate.

Key Design Iteration: From System-Driven to Human-Intent–Driven AI

Diagram

The final experience reflects this shift through lightweight AI update notifications. Clinicians are informed when new data is available while remaining in control of whether and when AI updates occur.
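Readiness signals and notify-only updates can be sketched together as follows. This is a hypothetical Python illustration: `NoteSession`, the `key_fields` list, the completeness ratio, and the `regenerate` callback are assumptions standing in for whatever the product actually uses. New data only raises a notification flag and changes the readiness summary; the note is regenerated solely when a clinician calls `refresh`.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class NoteSession:
    key_fields: list[str]              # data considered essential for a note (assumed)
    regenerate: Callable[[dict], str]  # stands in for the AI note generator
    data: dict = field(default_factory=dict)
    note: str = ""
    update_available: bool = False

    def on_data_arrived(self, name: str, value: object) -> None:
        # New data never rewrites the note; it only raises a notification.
        self.data[name] = value
        self.update_available = True

    def readiness(self) -> dict:
        # Readiness signal: share of key fields present, plus what is still missing.
        missing = [f for f in self.key_fields if f not in self.data]
        total = len(self.key_fields)
        completeness = (total - len(missing)) / total if total else 1.0
        return {"completeness": completeness, "missing": missing}

    def refresh(self) -> str:
        # Only an explicit clinician action triggers AI regeneration.
        self.note = self.regenerate(dict(self.data))
        self.update_available = False
        return self.note
```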

Final Experience: Human-Initiated AI Refresh

Demo

Iteration 2: AI Ingestion Fallback & Recovery

AI-assisted tagging was introduced to scale file ingestion and support downstream automation. It is a low-risk but high-volume AI surface.

Design Exploration - Three Upload Models

We explored three models, weighing trade-offs between scalability, reliability, and operational clarity, and ultimately chose full-AI tagging.

AI Ingestion Models — Design Exploration

Diagram

Key Design Decision & Iteration: AI-Gated Ingestion with Recovery

While full AI automation improved ingestion efficiency, it exposed a system-level risk: file-level failures could block entire uploads. By analyzing edge cases and collaborating with engineering, I redesigned ingestion into an AI-gated model with partial success, file-level recovery, and clear status visibility.

Key Design Decision: From Batch Blocking to File-Level Recovery

Image

The system allows users to proceed despite AI failures. Failed files are isolated and surfaced later, providing a clear recovery route.
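Partial success with file-level recovery can be sketched as tagging each file independently and collecting failures instead of aborting the batch. In this hypothetical Python illustration, `tag_file` stands in for the AI tagging step and `retry_failed` for the recovery route; neither name comes from the product.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable


@dataclass
class UploadResult:
    tagged: dict[str, list[str]] = field(default_factory=dict)  # file name -> AI tags
    failed: dict[str, str] = field(default_factory=dict)        # file name -> error message


def ingest(files: list[str], tag_file: Callable[[str], list[str]]) -> UploadResult:
    """Tag every file independently so one failure cannot block the whole upload."""
    result = UploadResult()
    for name in files:
        try:
            result.tagged[name] = tag_file(name)
        except Exception as exc:  # isolate the failure to this one file
            result.failed[name] = str(exc)
    return result


def retry_failed(result: UploadResult, tag_file: Callable[[str], list[str]]) -> UploadResult:
    """Recovery route: re-run tagging only for files that failed earlier."""
    retry = ingest(list(result.failed), tag_file)
    result.tagged.update(retry.tagged)
    result.failed = retry.failed
    return result
```

Because `ingest` returns successes and failures separately, a UI could let users continue with the tagged files right away and surface `failed` later.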

Final Outcome: Recovery-First Ingestion at Scale

Demo

Retrospective

Lessons / Next step

My next steps include:

Extend validation beyond clinic contexts to real patient journeys and mobile touchpoints, evolving clinician-first patterns into consumer-friendly experiences with lower cognitive load.

Formalize interaction patterns into a scalable design foundation to support faster iteration and consistency across both user portals.

Lesson 1 - AI interaction design

Trust in AI systems is shaped more by how uncertainty and control are expressed in the interface than by model accuracy alone.

Lesson 2 - Data & system design

Designing data as a shared interaction layer made it possible to support visualization, AI reasoning, and task workflows without duplicating complexity.